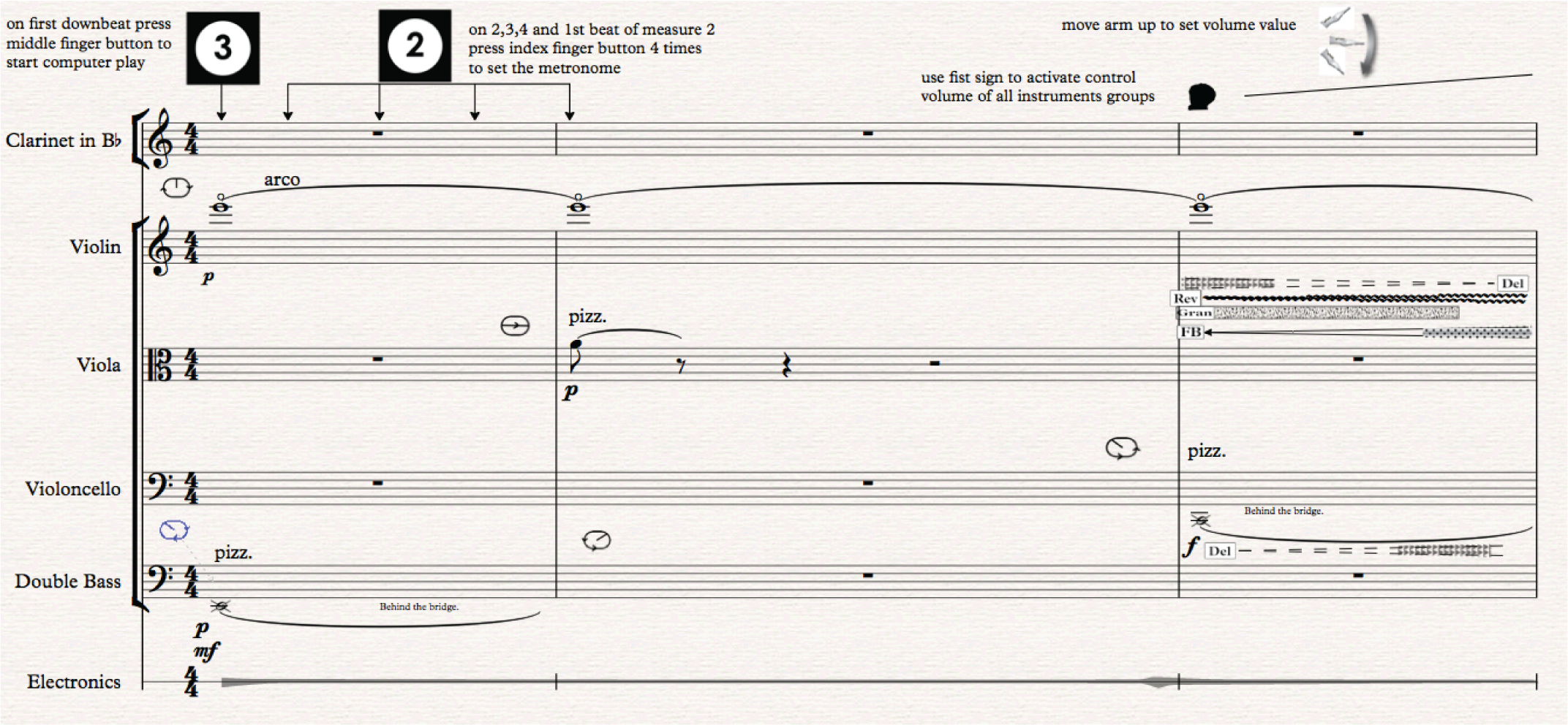

In my blog post “Closer look at the Score”, dated 1 March 2018, I analyzed the conducting instructions in the opening measures of Kuuki no Sukima: how the conductor had to press the start button (3rd button), then press the tempo button (4th button) four times to set the tempo, and finally close her fist to activate the volume control.

Sonic analysis

In this post I will focus on the sonic part of the concert, i.e. how the sound of the instruments interacts with the electronic sound effects of the computer. As far as possible, I will try to show the relationship between the written notation and the sonic experience, and how I use traditional notation in conjunction with the “traditional” graphical interface of music software applications. That interface is commonly called “automation”: a control whose value changes automatically over the course of a timeline.

So, once again let’s take a look at the opening measures of Kuuki no Sukima.

In the opening measures there are no electronics, so the instruments sound clear and dry until the conductor activates the volume control by closing her fist and raising her arm. At that point, approximately at the beginning of measure three, the conductor increases the volume of the electronic sounds from zero (no electronics) to the level she wants. This is a slight change from the original version, where the electronics were supposed to be present from the beginning. Why the change? As mentioned in an earlier post, it was made to simplify the actions the conductor had to perform at the beginning of the work. Aesthetically it turned out to be a stronger beginning and gave the composition a more expressive opening, with the electronics fading in alongside the visual gesture of the conductor raising her arm.

Notated Score and the DAW Interface connection

As can be heard, the sound of the Violin starts to change in the 3rd measure, as soon as the conductor raises her hand. The sounds of the other instruments are not as clear: the pizzicato with delay in the Cello and Viola, and the fast airy note pattern (arco battuto) in the Double Bass, where delay and granulation increasingly affect the sound. Why?

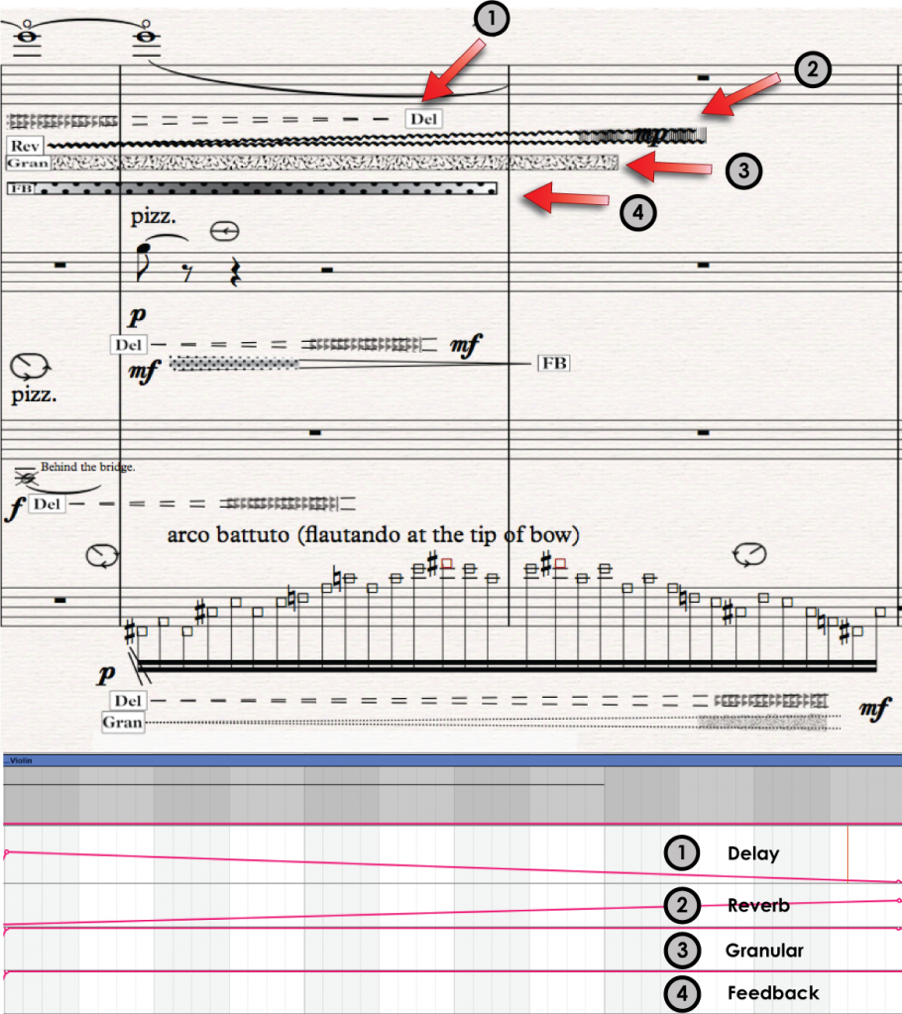

Let’s first take a closer look at the score and the electronic score to figure out how things are connected.

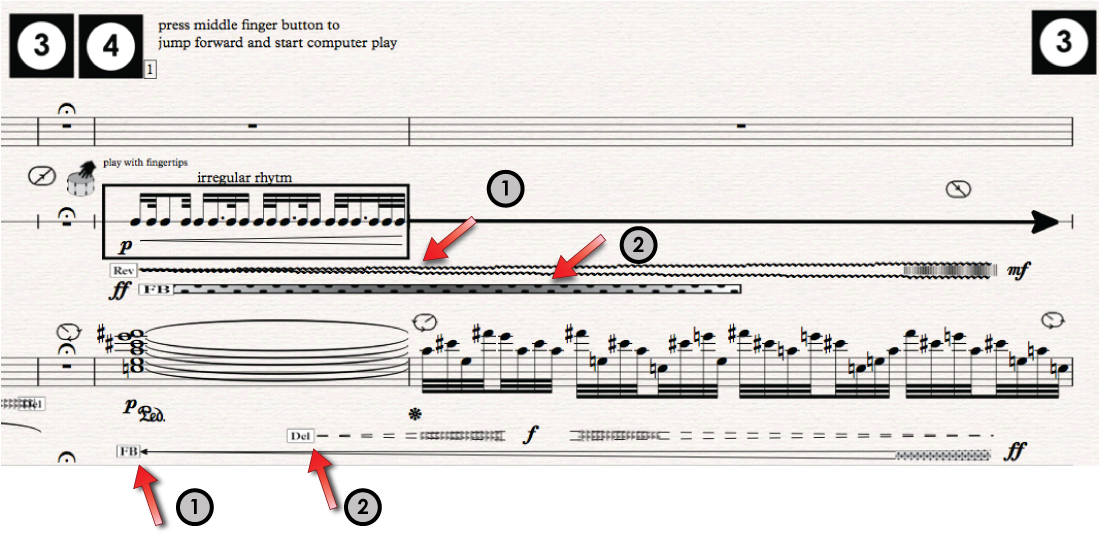

The above illustration shows the connection between the notated score and the graphical interface of the computer application, or DAW (short for Digital Audio Workstation). Focusing on the Violin part, the following electronics are added to the high opening note (E):

- Delay with diminuendo (decreasing volume) from approximately mf to silence.

- Reverb fades in with increasing volume to approximately mp (relatively little reverb).

- Granulation starts with ff or very strong and stays unchanged.

- Feedback starts with ff (very strong) and stays unchanged.
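
The four Violin lanes listed above can be sketched as breakpoint automation, which is roughly what a DAW automation lane stores. This is only an illustration, not the actual ConDiS or DAW data: the numeric values assigned to the dynamic markings, the four-beat duration, and the names `DYNAMICS`, `lane` and `value_at` are all assumptions made for the example.

```python
from bisect import bisect_right

# Hypothetical mapping from dynamic markings to a normalized effect amount (0.0 to 1.0).
DYNAMICS = {"silence": 0.0, "ppp": 0.1, "pp": 0.2, "p": 0.35,
            "mp": 0.5, "mf": 0.65, "f": 0.8, "ff": 1.0}


def lane(points):
    """Build an automation lane from (time_in_beats, dynamic_marking) breakpoints."""
    return [(t, DYNAMICS[d]) for t, d in points]


def value_at(lane_points, t):
    """Linearly interpolate the lane value at time t, holding the end values."""
    times = [p[0] for p in lane_points]
    if t <= times[0]:
        return lane_points[0][1]
    if t >= times[-1]:
        return lane_points[-1][1]
    i = bisect_right(times, t)
    (t0, v0), (t1, v1) = lane_points[i - 1], lane_points[i]
    return v0 + (v1 - v0) * (t - t0) / (t1 - t0)


# Assumed duration of the opening Violin note: 4 beats.
violin = {
    "delay": lane([(0, "mf"), (4, "silence")]),    # diminuendo from mf to silence
    "reverb": lane([(0, "silence"), (4, "mp")]),   # fades in to mp
    "granulation": lane([(0, "ff"), (4, "ff")]),   # ff, unchanged
    "feedback": lane([(0, "ff"), (4, "ff")]),      # ff, unchanged
}

for name, points in violin.items():
    print(name, [round(value_at(points, beat), 3) for beat in range(5)])
```

Reading a lane at any beat gives the effect amount the DAW would apply at that moment, which is the same information the hairpins and dynamic markings carry in the notated score.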

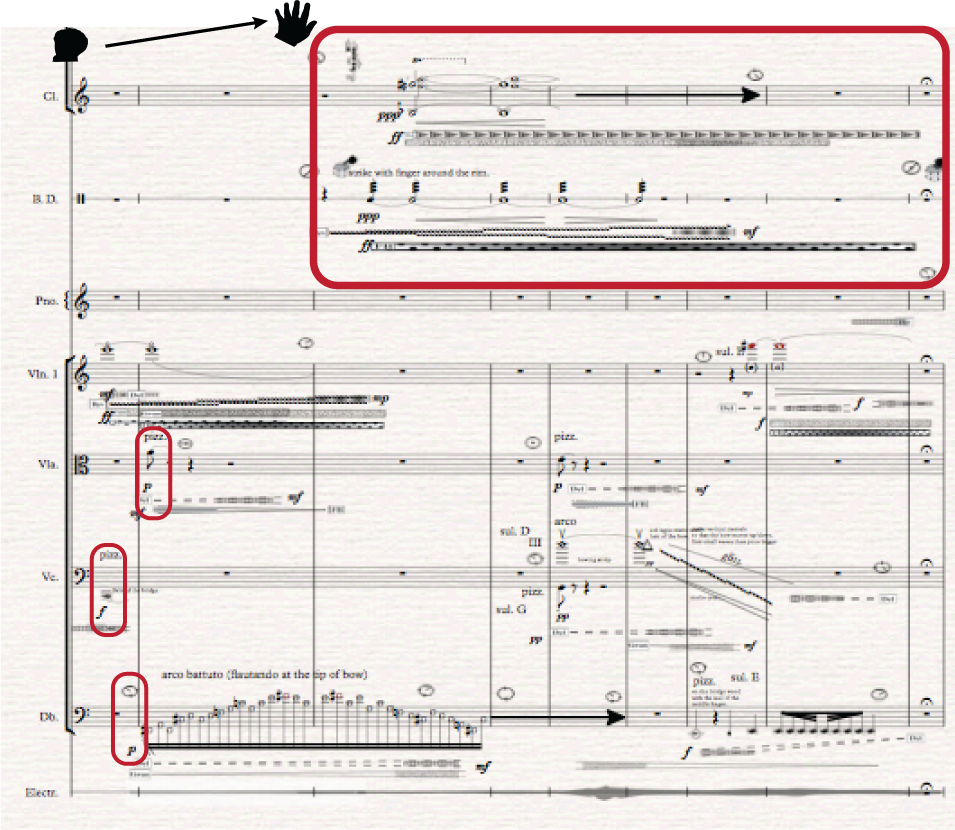

The above figure shows how the other instruments (Viola, Cello and Double Bass) add effects that increase or decrease in the same way as shown in the Violin part. But why are they not as easily audible as the high Violin pitch?

First, the loud pizzicato in the Cello in measure 3 comes right at the beginning of the conductor’s increase of the electronic volume, so the delay effect written in the score is not audible. The following Viola pizzicato in measure 4 has an increasing delay and decreasing feedback. It can hardly be heard, most likely because the pizzicato is soft (p) and the conductor still hasn’t raised the volume to its maximum value. For the same reason, the fast airy note pattern in the Double Bass starting in measure 4 is not very clear. It should also be mentioned that the Double Bass is too soft; its volume will be adjusted in the next revised version.

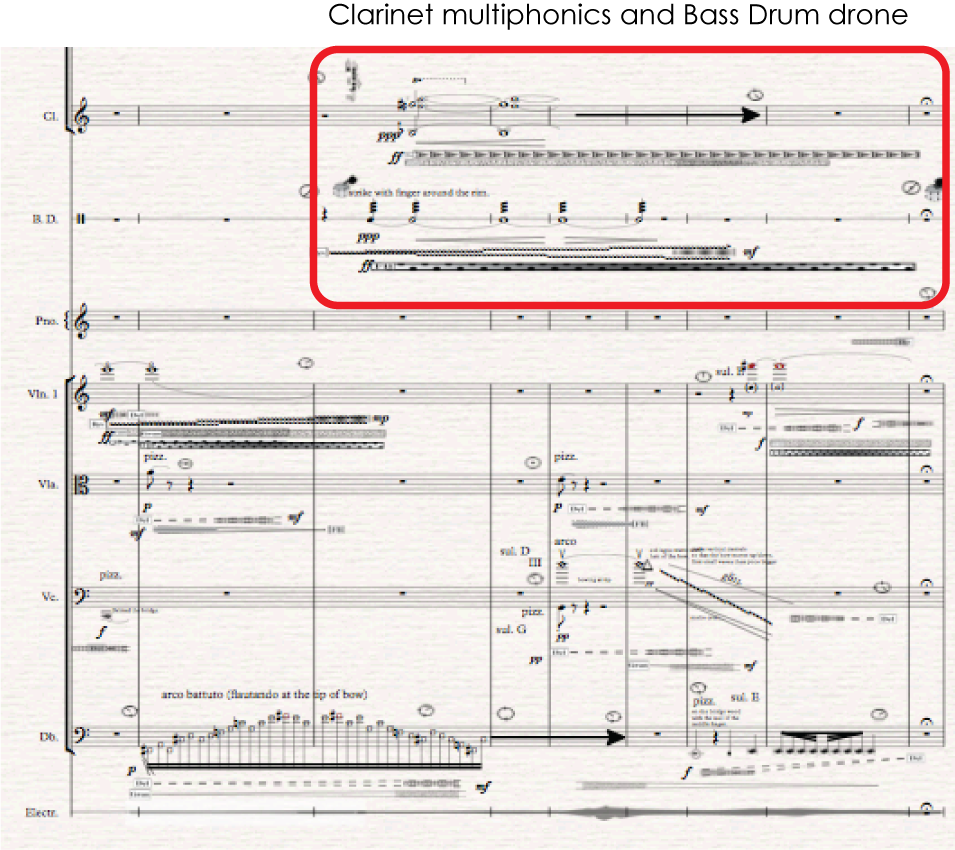

The electronic effects on the Bass Drum and Clarinet entrance in measure 5 should be more audible and closer to the expected sonic spectrum. This is especially true of the Clarinet, which, although written ppp (very soft), is played louder than, for instance, the Double Bass. Keep in mind that the Clarinet can’t play the multiphonics very softly, and therefore the ppp indicates as soft as possible (this should be written in the score). Similarly, the Bass Drum can’t play the finger strike very softly, although it is softer than the Clarinet. The Clarinet multiphonics give a very rich, high-frequency sound that should be ideal for picking up the electronics, unlike the Bass Drum, whose low-frequency sound is affected less audibly.

For some reason, which might be related to the choice of effects, the Clarinet multiphonics and the Bass Drum finger strike seem to pick up very little of the electronics, much less than expected. The intensities of, for instance, Delay and Granular in the Clarinet are written ff (very strong), which should give maximum effect. It could be my mistake to write a Delay for a sustained note, since a sustained note can only be delayed at its beginning, and I might have to take a closer look at the granulation. For the final version, I will replace the Delay effect with a Feedback effect, which should give a richer sonority, and adjust the granulation.

Instrument – electronic sound relation.

Here we come to some very interesting complications. First of all, there is the harsh reality of working in the medium of mixed music, where there is very often very little time to work with the performers. This means that tests have to be done by computer simulation. Although I did meet with most of the players during the preparation and composing period, I did not have time to adjust the electronic sound effects. Most of the time with the performers was spent working on the extended instrumental techniques: the physical aspect, the notation, and the sonic result, i.e. how this and that sounded in practice. These are all very time-consuming factors, and although the performers were all very positive and helpful, there was hardly any extra time for adding the electronics.

Working with Violinist Ina

The same happened during the rehearsal period before the premiere performance in November: most of the time went into getting the right acoustic sound without the electronics. It was not until the last rehearsal that the electronics were added, with a sigh of relief and surprise from the performers. I am not sure why this happens, but it seems to happen very frequently in the world of mixed media. One obvious factor is that the electronic equipment (loudspeakers, microphones, mixer etc.) is usually not in place until the last minute. That was not the case here, since all the equipment was in place right from the beginning. However, there were three other works on the program that took significant time to rehearse, which left too little time. The fact that the conductor is classically trained might also have something to do with it. She spent a lot of time getting the right sound without the electronics, the right balance and the other expressive details that are important in classical performance. Perhaps it also mattered that she had to wear a glove to conduct the electronics and press buttons to move or jump to the right markers in the score. The conducting glove could also be more user-friendly. For instance, you could not jump to whatever measure you needed to practice; in other words, the flexibility of the technology was not good enough. Maybe it was the conductor’s theory that if the instrumental playing was correct then the electronics would be correct, a theory that I agreed with at the beginning. Looking back, this might have been a mistake, since the performers did complain that they did not have enough time to learn how the electronics would react to their playing. The ideal situation would have been a week-long workshop focusing on Kuuki no Sukima only. But that situation is rare, and we have to keep in mind that the aim of the research was to create a musical tool for conductors that would be easy enough to use under “normal” circumstances. Therefore, one could say that ConDiS proved to be a successful tool, since the conductor did manage to conduct and control the electronics during the performance.

All the electronic sounds are directly tied to their instrument; that is to say, the electronic sound of the Violin is based on the sound the Violin is playing at that time. In other words, it is live, real-time sound processing of the Violin. Therefore, if the Violin plays a soft note the electronics are going to be soft, and vice versa. A low frequency is going to sound different from a high one.

This is problematic because a written ff, meaning a very strong use of the effect, does not mean that the resulting sound is going to be loud. It means that the electronics have a large effect on the played tone, whether that tone is loud or soft.
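
To make the distinction between effect strength and resulting loudness concrete, here is a toy sketch of a feedback delay that returns only the processed signal. It is not the processing used in the piece: the function names, the sample values and the parameters (`delay`, `feedback`, `wet`, `volume`) are assumptions chosen for illustration. Here `wet` stands in for the written effect dynamic (1.0 for ff) and `volume` for the level set by the conductor.

```python
def feedback_delay(samples, delay, feedback, wet, volume):
    """Return only the processed (wet) output of a simple feedback delay.

    wet    -- effect amount, standing in for the written dynamic (1.0 = ff)
    volume -- overall electronics level, standing in for the conductor's control
    """
    buffer = [0.0] * delay
    out = []
    for i, x in enumerate(samples):
        delayed = buffer[i % delay]
        buffer[i % delay] = x + delayed * feedback  # feed the echo back into the line
        out.append(delayed * wet * volume)
    return out


def peak(signal):
    """Largest absolute sample value, used here as a crude loudness measure."""
    return max(abs(s) for s in signal)


# Two short notes followed by silence: one soft, one loud (arbitrary amplitudes).
soft_note = [0.1] * 10 + [0.0] * 90
loud_note = [0.8] * 10 + [0.0] * 90

# Same ff effect setting and same conductor volume for both notes.
wet_soft = feedback_delay(soft_note, delay=20, feedback=0.5, wet=1.0, volume=1.0)
wet_loud = feedback_delay(loud_note, delay=20, feedback=0.5, wet=1.0, volume=1.0)

print("soft note, ff effect:", peak(wet_soft))
print("loud note, ff effect:", peak(wet_loud))
```

With identical effect settings, the processed sound of the soft note stays soft, because the electronics only transform what the instrument gives them. Setting `volume` to zero silences the electronics entirely, whatever the written effect dynamic.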

In measure 5 the conductor has increased the electronic sound level to the desired point. Keep in mind that it is entirely up to the conductor to adjust the volume, just as she does when conducting the “other” instruments. Soon thereafter the Bass Drum and Clarinet start to play. It is possible that the conductor has not set the volume level high enough, but comparing the three different performances suggests that this is not the case. Let’s look and listen to an example.

Example measures 5-11 from Harpa Concert

Example measures 5-11 from Torshavn Concert

Example measures 5-11 from Copenhagen Concert

As said before, I was expecting more sound processing here, especially in the Clarinet. Why? The Clarinet is playing multiphonics that are relatively rich and high in pitch, so the electronic sounds should be clear. It is a bit different with the Bass Drum, which is playing a deep drone. Although the multiphonics for the Clarinet are written as very soft (ppp), it is not possible to play them much softer than we hear on the recording. This is also a very good example of the inaccuracy of classical notation, since the ppp means as soft as possible but the relative loudness is more like an mf (mezzoforte), or medium loud.

Another factor that might count is that the conductor’s adjustment of the volume level is not right. The Violin entrance in measure 9 supports this theory, because there the conductor has increased the electronic sound even more, and it is therefore much more audible.

Let us now see and hear the opening measures from the Harpa performance with score and illustrations.

Measure 11 – 14.

In measure 11 there is a general pause, where the conductor stops the playback of the electronics by pressing the 3rd button. After the pause, the conductor continues by pressing the 4th button, which jumps the playhead of the DAW to exactly the beginning of measure 12. That synchronizes the DAW and the notated score, so that the Bass Drum and Piano duo should be exactly in sync with the effects of the electronics.
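
The stop-and-jump procedure described above can be modeled as a small transport object. This is a hypothetical sketch and not the ConDiS implementation: the class `Transport`, its method names, the marker list and the assumption that the 4th button also resumes playback are all mine. It also leaves out the tempo-setting role the 4th button has at the opening of the piece.

```python
class Transport:
    """Toy model of a DAW transport driven by two glove buttons."""

    def __init__(self, markers):
        self.markers = sorted(markers)   # marker positions, in measures
        self.position = self.markers[0]  # playhead position, in measures
        self.playing = False

    def press_button_3(self):
        """3rd button: start or stop playback."""
        self.playing = not self.playing

    def press_button_4(self):
        """4th button: jump to the next marker and continue playing (assumed)."""
        for marker in self.markers:
            if marker > self.position:
                self.position = marker
                break
        self.playing = True

    def advance(self, measures):
        """Move the playhead forward, but only while playing."""
        if self.playing:
            self.position += measures


# Hypothetical markers at the start of the piece and at measure 12.
transport = Transport(markers=[1, 12])
transport.press_button_3()   # start at measure 1
transport.advance(10.5)      # somewhere inside measure 11
transport.press_button_3()   # general pause: stop the electronics
transport.press_button_4()   # jump to the beginning of measure 12 and continue
print(transport.position, transport.playing)
```

Because the jump lands on a marker rather than wherever the playhead happened to stop, any drift between the conductor and the DAW during the pause is discarded, which is the point of the synchronization step.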

As shown in the figure above, the Bass Drum comes in with increasing reverb and a strong feedback effect, while the Piano has increasing feedback as well as a delay that first increases and then decreases.

Bass Drum – Piano

- Bass Drum: Reverb crescendo to mf (mezzoforte, medium loud). Feedback at an unchanged ff (fortissimo, very strong).

- Piano: Delay crescendo to f (forte), then diminuendo to zero. Feedback from zero to ff (fortissimo, very strong).

Now, look at the automation for the same measures as written in the automation of the DAW.