Experiments via Trial Compositions

Early in the research process, it became apparent that both the practical and the artistic intentions of the research needed to be studied.

Practical factors with a specific focus on:

- Develop a conducting instrument: a conducting glove adapted to the conductor’s traditional conducting movements.

- Develop a method for synchronizing the interaction of live instruments and live computer-generated sounds (DAW).

- Develop a musical notation (graphics) method for communicating visual and audible information, i.e., a musical score.

Artistic factors with a specific focus on:

- Musical interaction between the conductor’s conducting gestures and the audible reaction.

- Musical interaction between the conductor and the performers (ensemble).

- Extended instrumental sonority through the use of the ConDiS system, and sonic changes through instrumental notation and the digital audio workstation (DAW).

To conduct my research, I found it necessary to write experimental music pieces that I chose to call Etudes. The intention of these experiments was to develop and create notated instructions for an expanded sonority. The fact that they were not composed for public performance gave me the freedom necessary to stretch the limits and go beyond classical boundaries. These experiments enabled me to intertwine acoustic and electronic sounds in parallel with my compositional methods.

Notation, Symbols and Signs

As a classically trained composer, my interest lies in writing down my compositions. I like the process of playing with my kids, called notes[1], and carefully selecting which of them I am going to introduce in my composition. I like to decide how I treat them, give instructions on how to play with them, and decide what tempo and what dynamics let them flourish. I like to wonder about and figure out the fundamental question in music composition: how can I give them their moment to express themselves and shine? All this I like to do through the magical transformation from dots on a piece of paper, placed carefully by the composer, to divergent soundwaves created and interpreted by the musical performer.

Music notation is for me a collection of letters with which I can write my own personal language when trying to gain a more organized and coherent idea of what I want to create.

It is no less critical for me to treat the electronic sounds in the same way; therefore I needed an electronic notation dictionary: a collection of signs and symbols that I can understand and somewhat hear, touch and feel, yet that remains understandable for the conductor and performers to follow.

The relationship between what is “heard” and what is “seen” in the score is a fundamental issue. Therefore, it was important to come up with graphics for the electronic effects that are related to well-known notational signs. This is in harmony with my own principle of re-using and adjusting already accepted signs instead of inventing new ones.

How can I represent the electronic sounds in a way that makes them easy for the performer and conductor to follow and understand? In my search for an answer, various experiments were made, including testing the graphics (fonts) from Lasse Thoresen’s system “Aural Sonology”[2] (Music, 2018, n.d.). Since Thoresen’s system is more a pedagogical tool used to describe the analysis of sonic and structural aspects of music-as-heard, it shows little detail as to what those sounds really are. It therefore turned out to be impractical for my purpose.

Practical and Artistic Aspects

The following section concentrates on the graphic notation research, which was an essential part of the pre-compositional preparation and an important part of the realization of ConDiS, its use, and its possibilities.

Compositions Experimenting with Notation

To realize these additional instructional features, experimental pieces, or studies, were written, each focusing on a specific factor of primary control, i.e., volume, spatial location, or sonority. Various “new” graphics for compositional and conducting instructions needed to be developed. These graphics were later tested in live performance. In that manner, I could better assess their clarity, practicality, and accuracy.

Etude for Voice and ConDiS

Etude for Voice and ConDiS version 1.0

The first attempt to develop and extend a personal style of mixed electronic and traditional notation was realized through an experimental composition named Etude I for Voice and ConDiS. Voice was chosen since I could easily use my own voice and therefore did not need to rely on external assistance. Using a wireless headset, I was able to move freely, use my voice as an instrument, and work on live control of volume, pan, and sound effects using an early version of ConDiS.

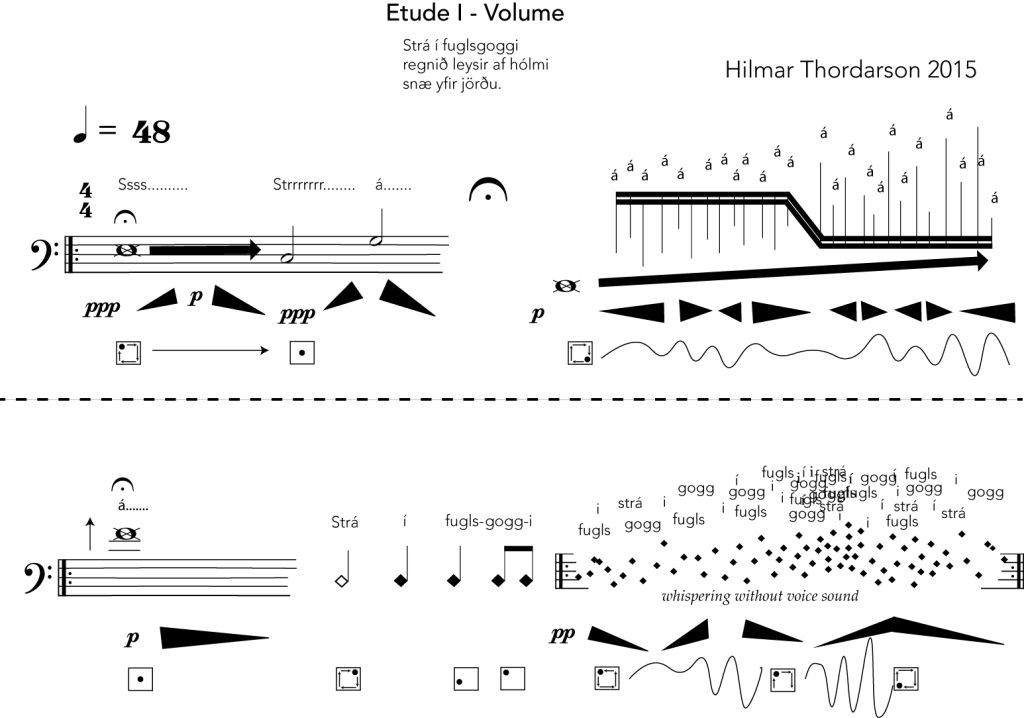

Figure 36. Etude for Voice and ConDiS first version.

Figure 36 shows the opening of the first version of Etude I, with the subtitle Volume indicating that this exercise focuses on live interactive control of the volume of the electronics.

A haiku-style poem by the Icelandic poet Pjetur Hafstein Lárusson, “Strá í fuglsgoggi”, was chosen since the haiku form and the phonetic transcription of Icelandic gave a certain degree of freedom and inspiration.

The score was written in Adobe Illustrator, since commercially available music notation software such as Sibelius and Finale is not flexible enough to meet the needs of this kind of experimental composition.

Notation Analysis

At the very beginning, the performer makes a very soft (ppp) “Ssss…..” sound on any low pitch and holds that sound for some time (fermata sign) while making a crescendo to p and then a diminuendo back to ppp, before making the “Strrrrrrr……” sound with a similar crescendo and diminuendo. Thereafter, the performer sings a given note C (or a note close to that C) and then a note a perfect fifth higher on the letter “á”. After that there is an untimed pause (fermata) before the next phrase, which begins on a very deep pitch and continues with short jumping notes moving upwards in pitch, all on the letter “á”.

In the second line, the vocalist sings a very high, long note on the letter “á” before whispering the words “Strá í fugls–gogg-i” using the durations of the diamond-shaped notes (half-note, quarter-notes, and eighth-notes). The following diamond notes are all whispered, with spatial notation used to indicate pitch and density. This phrase is repeated as often as needed.

Volume Control Analysis

Before starting the performance, the vocalist needs to activate the ConDiS volume control by making an OK sign with the conducting glove (ConGlove). The vocalist then gradually raises and lowers the left arm to follow the written crescendo and decrescendo of the electronic volume. That way the voice and the electronics change volume in parallel.

The next phrase has written crescendos and diminuendos of various durations, ranging from the soft volume level p up to an unspecified volume (left to the performer to decide).

The next line begins with a long high note that starts at the volume level p and diminishes to silence, before an extremely soft whisper of “Strá í fugls–gogg-i”. During the final phrase of this page, the volume ranges from very soft up to an unspecified volume, with the spaces between volume signs treated as stable points.

Pan Control Analysis

To activate the pan control function, the conductor needs to give a thumbs-up sign and then move the arm or wrist in circles according to the written indications in the score. The little squares with a dot and arrows indicate the sonic location (pan) of the sound: at the very beginning the sound starts in the left front loudspeaker and moves linearly clockwise in a circle until it stops in the middle at the sung “Strá”. During the next phrase, áááááá…, the sound continues to move in a circle, but its path is disturbed by left/right and up/down motion of the wrist, making the sound move non-linearly. The non-linear motion is indicated with lines simulating the motion. During the very high, long-held note on “á”, the sound is located in the middle. At the open diamond note on “Strá”, the electronic sound appears in the front right speaker and moves linearly clockwise until it rests, without moving, in the left rear at “fugls” and in the left front at “gogg-i”. The last phrase is similar to the last phrase of the first line, except that the sound movement is denser, with short breakpoints where the hand motion stops for a short period before continuing.
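The clockwise circular motion described above can be illustrated with a minimal sketch. It assumes four loudspeakers and equal-power panning between neighbouring speakers; the function name `quad_gains`, the speaker labels, and the angle convention are hypothetical and are not taken from the ConDiS software.

```python
import math

# Speaker order follows the clockwise path described in the text,
# starting at the left front loudspeaker (labels are hypothetical).
SPEAKERS = ("left_front", "right_front", "right_rear", "left_rear")


def quad_gains(angle_deg):
    """Return equal-power gains for a sound moving on a circle.

    An angle of 0 degrees places the sound in the left front speaker;
    increasing angles move it clockwise (assumed convention).
    """
    position = (angle_deg % 360.0) / 90.0   # 0..4 around the four speakers
    whole = int(position)
    index = whole % 4                       # speaker the sound is leaving
    fraction = position - whole             # progress towards the next speaker
    gains = dict.fromkeys(SPEAKERS, 0.0)
    gains[SPEAKERS[index]] = math.cos(fraction * math.pi / 2)
    gains[SPEAKERS[(index + 1) % 4]] = math.sin(fraction * math.pi / 2)
    return gains
```

In this sketch a steady increase of the angle corresponds to the linear clockwise motion in the score, while the non-linear motion would correspond to an angle that changes irregularly with the wrist.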

Etude for Voice and ConDiS version 1.1

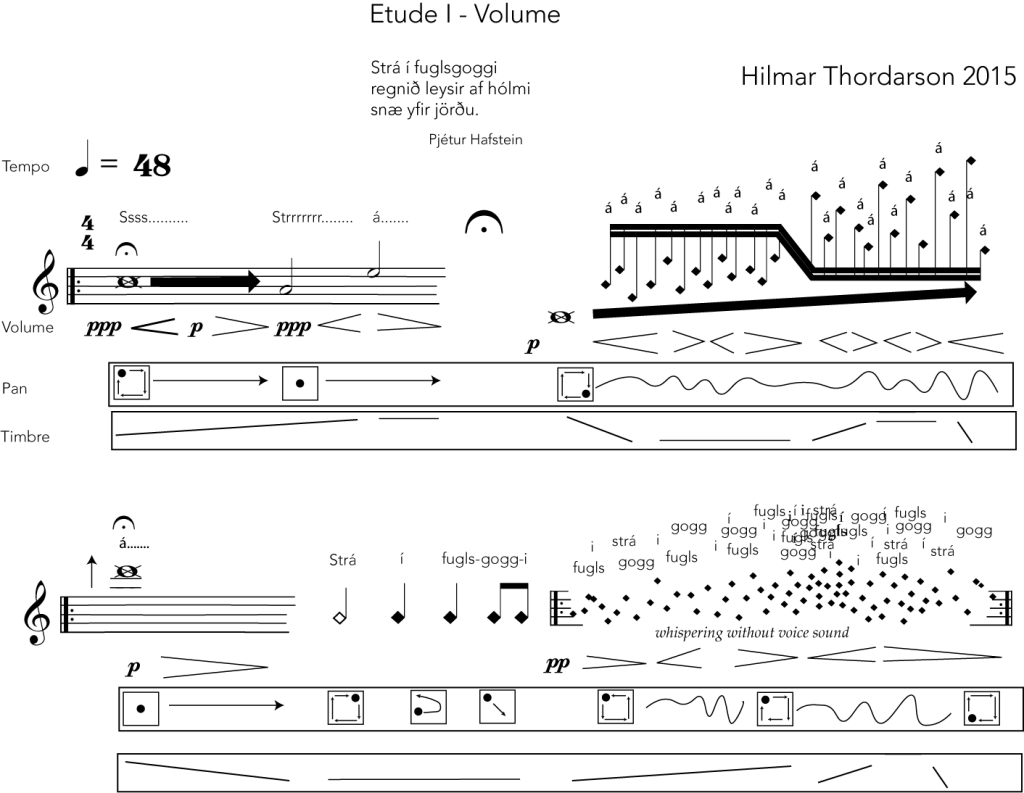

The next figure shows the same score with minor changes. The most noticeable are that a box has been drawn around the electronic sound signs and that a “Timbre” indication has been added to the electronic score. The volume sign has changed, and more detail has been added to the pan graphics.

Putting a box around the electronic sound signs made the score much clearer for the performer to read, while still keeping the close relationship between the electronics and the instrumental score. To simplify the score even further, a decision was made to leave out the black triangular volume indicators and use traditional hairpin notation. There was no need for a specific electronic volume sign since the volume of the electronics followed, or ran parallel to, the volume of the voice. With further development of the ConDiS system, timbre control became available and had to be integrated into the score. Linear motion inside a box was chosen mainly because it differed from the other signs (symbols) and was thought to give a correct visual image.

Figure 37. Etude for Voice and ConDiS Version 1.1

Etude I for Voice and ConDiS was an important experiment, especially since it, literally speaking, allowed me to use my own voice to test and discover the possibilities I had in my hand. It helped me to understand and get a feel for what would happen with many different actions.

Experiences and Results

Experimenting with and performing Etude for Voice was an important step towards the development of the ConDiS system. Learning to use the “ConGlove” to control various parameters with sign language based on finger combinations, as well as moving the sound levels and location with the left arm, was a thrilling experience. Hours were spent in the studio improvising with my own voice mixed with electronic sound effects controlled by the conducting glove. The sound moved from one speaker to another according to the wrist movement, although a little spike or click appeared if the wrist moved too fast. Its volume level went up and down according to the arm movement. Timbre or sonic changes were made using the Hadron Particle Synthesizer[3], which provides control of various synthesis parameters. It was fun to play with, and it gave me a touch and feel of its potential. But I was not able to follow the instructions of the notated score. It turned out to be too complicated: I was unable to do all these various arm, hand, and finger gestures while simultaneously reading the score.

I was unable to control volume and sonic changes at the same time: how would I increase the volume and decrease the sonic changes with my arm movement when I could only do one gesture at a time? How could I move the sound location in space with my wrist movement without moving the volume level or sonic changes? I was unable to synchronize the “score” and the computer sounds since there was no way to send “score following” information to the computer or to turn any of the controllers on or off.

Therefore, new methods and simplifications were needed.

Etude for Percussion and ConDiS

Although the first experiment, writing instructions for a conductor to conduct her own performance, was partially unsuccessful, I decided to move on to the next experiment: writing a piece for one performer and a conductor wearing a new version of the “ConGlove.”

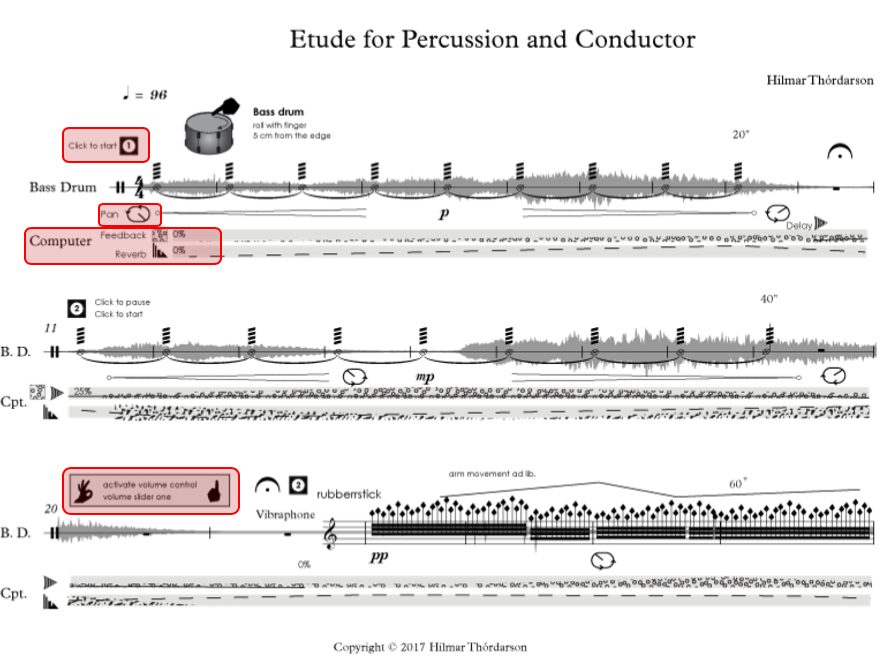

The outcome was Etude for Percussion and ConDiS illustrated below.

Button Signs in the Musical Score

Many changes were made from the notation of Etude for Voice and ConDiS, the addition of button functions to the “ConGlove” being the largest breakthrough. Now I was able to notate where the conductor starts and stops the performance and where to synchronize playback of the DAW with the live performer, all with clicks of various buttons. With more than one click I was able to set the metronome (tempo) of the piece and jump back and forth between cue numbers. The button icon, a black square with a number surrounded by a white circle, was a clear design easily understood by the conductor.
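As an illustration of setting the tempo with more than one click, the sketch below derives beats per minute from the time between successive button clicks. Averaging the intervals is an assumption made for this example, and `tempo_from_clicks` is a hypothetical name, not part of ConDiS.

```python
def tempo_from_clicks(click_times):
    """Estimate a tempo in beats per minute from click timestamps (seconds).

    Each click is treated as one beat; the mean interval between
    successive clicks sets the tempo (an assumption of this sketch).
    """
    if len(click_times) < 2:
        raise ValueError("at least two clicks are needed to set a tempo")
    intervals = [later - earlier
                 for earlier, later in zip(click_times, click_times[1:])]
    if any(interval <= 0 for interval in intervals):
        raise ValueError("click times must be strictly increasing")
    mean_interval = sum(intervals) / len(intervals)
    return 60.0 / mean_interval
```

Four clicks half a second apart, for example, would give a tempo of 120 beats per minute.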

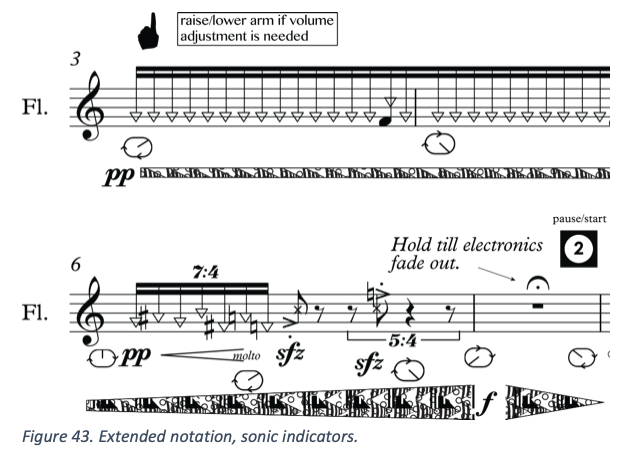

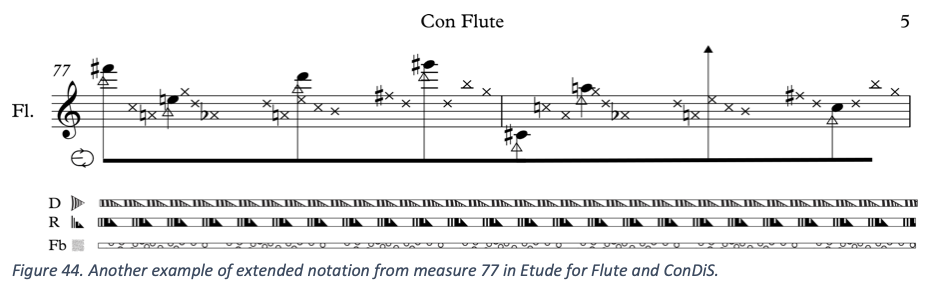

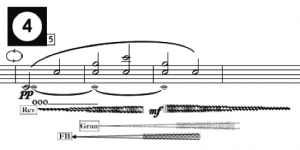

Visual Audio Files in the Musical Score

Another change was to include an image of a recorded audio file of the electronics so that the performer could visually follow the audio of the electronics. I believed that adding a picture of the electronic audio would help the performer to see and feel the accompanying electronics. Placing the audio images behind the staves would, I considered, both be easier for the performer to read and save considerable space on the manuscript paper.

Figure 38. Page 1. from Etude for Percussion and ConDiS

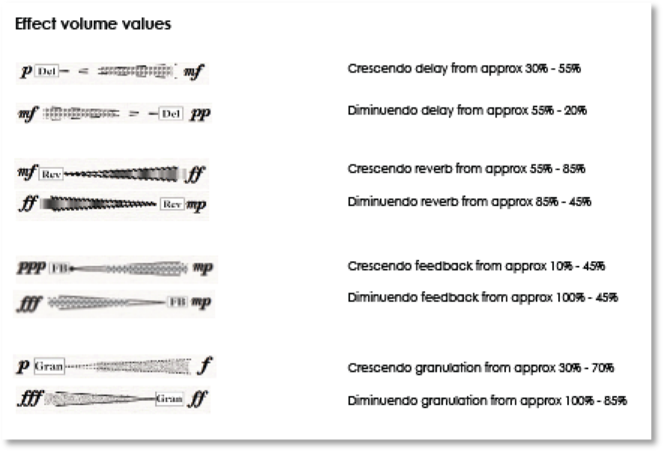

Sonic Changes

Since the sonic signs (i.e., volume, pan, and timbre) used in Etude for Voice and ConDiS were quite clear and usable, a totally new version was not needed; the “new” design is, however, much closer to traditional notation. Another important factor is that the sonic changes are notated for the performer and conductor as indications of what is written as automation in the computer part. They are not written in the score as instructions for the conductor to raise or lower her arm or to interact with the performance in any other way. In Etude for Percussion and ConDiS three fundamental effect units are used: delay, reverb, and procrastinate, an audio effect that creates a cascading pitch and delay effect, here named feedback since it has a feel of feedback. The “hairpin”-shaped icons indicate how much of the effect is used, spanning from 0% to 100%. For the delay effect, 0 to 100% indicates the dry/wet level and the feedback added to the effect. For the reverb it indicates the decay time, in other words how long it takes the reverb to fade out. For procrastinate, the hairpin indicates the volume of the effect, or how loudly it can be heard.
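The hairpin percentages described above could be translated into effect settings roughly as follows. This is only a sketch: the function name, the parameter names, and the reverb decay range of 0.5 to 8 seconds are assumptions made for illustration, not values from the ConDiS automation.

```python
# Assumed decay range for the reverb, in seconds (not from the source).
MIN_DECAY_SECONDS = 0.5
MAX_DECAY_SECONDS = 8.0


def effect_parameters(effect, amount_percent):
    """Translate a hairpin amount (0-100%) into hypothetical effect settings."""
    amount = min(max(amount_percent, 0.0), 100.0) / 100.0
    if effect == "delay":
        # The hairpin sets both the dry/wet level and the feedback.
        return {"dry_wet": amount, "feedback": amount}
    if effect == "reverb":
        # The hairpin sets how long the reverb takes to fade out.
        span = MAX_DECAY_SECONDS - MIN_DECAY_SECONDS
        return {"decay_seconds": MIN_DECAY_SECONDS + amount * span}
    if effect == "feedback":
        # The procrastinate effect, named "feedback" in the score:
        # the hairpin sets how loudly the effect is heard.
        return {"volume": amount}
    raise ValueError(f"unknown effect: {effect}")
```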

Many composers, including Kaija Saariaho, indicate the use of an electronic effect by marking the effect at the beginning and then drawing a hairpin that indicates its volume.[4]


Figure 39. Effect notation used by Kaija Saariaho

In Etude for Percussion the design is taken a step further by using a designated pattern for each effect. The intention is to make a somewhat clearer distinction between the various sound changes.

Figure 40. Notation of electronic sound effects in Etude for Percussion and ConDiS.


This gave the performer and conductor a better visual feeling for the sound they were hearing.

Visual Audio

Adding an image of a recorded audio file was an attempt to make it easier for the conductor and the performer to connect ear and eye, giving a picture of the sound and sound value as they perform. The image is placed behind the notes to save space and to make it easier to connect the written notes with their sonic outcome.

Sonic Location (panning)

Slight changes were made to the sonic location, or panning, sign. These changes were made to simplify the graphics and to move closer to the graphics commonly used in DAWs, which show a button-shaped icon with a line for the pan location.

The square sign has changed to a more oval sign with arrows on its edge to indicate pan motion. An arrow pointing from left to right indicates motion from left to right, and vice versa.

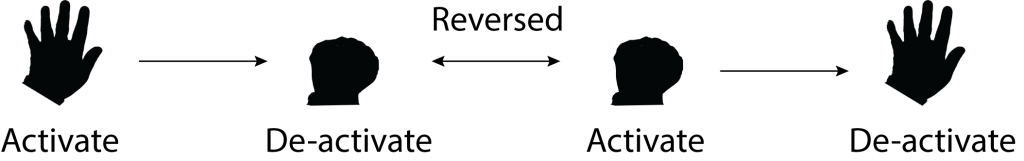

Sign Language

The introduction of sign language instructions for the conductor is another addition to the score writing. These can be seen as an OK sign, a thumbs-up sign, a fist sign, and little-finger and index-finger-up signs. For more detail on the use of sign language, see Gestural Function, p. 52.

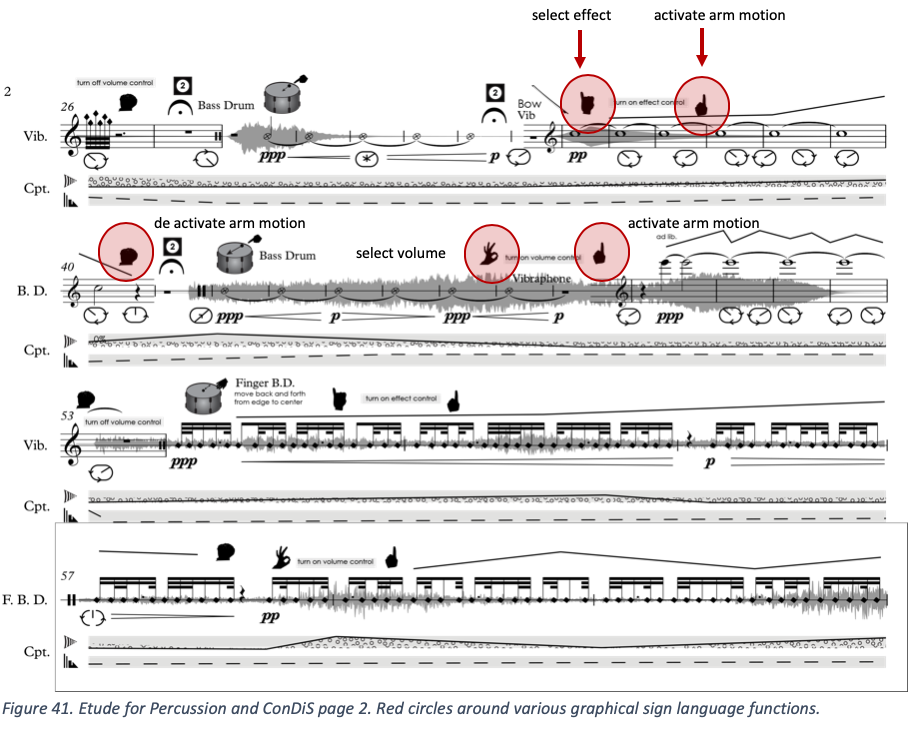

Figure 41. Etude for Percussion and ConDiS page 2. Red circles around various graphical sign language functions.

These different finger signs are all written for the conductor to “tell” the DAW which controller is to be activated and when. For instance, in measure 33, when the bowed vibraphone enters (red arrow), the conductor has to give a little-finger sign to tell the DAW that she is going to control the effect unit. The conductor then has to give an index-finger sign to activate level changes and move her arm up for a crescendo and down for a diminuendo, following the drawn line, before deactivating the control by closing her hand, shown with the fist sign. The closed hand as a sign for turning off an activation was later reversed to turn on an activation. The OK sign activates the volume control, while a thumbs-up sign activates the pan control.
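The sequence of signs in measure 33 can be read as a small state machine. The sketch below is one possible reading, not the ConDiS implementation: the class `GloveState`, the sign names, and the assumption that the OK and thumbs-up signs activate their controllers immediately are all hypothetical. It follows the original convention of this Etude, in which the closed hand deactivates the control.

```python
from dataclasses import dataclass, field
from typing import Dict, Optional

# Signs that, in this sketch, select a controller and activate it at once
# (an assumption; the text only states which controller each sign activates).
IMMEDIATE_SIGNS = {"ok": "volume", "thumbs_up": "pan"}


@dataclass
class GloveState:
    controller: Optional[str] = None   # controller currently selected
    active: bool = False               # whether arm movement is applied
    values: Dict[str, float] = field(default_factory=dict)

    def sign(self, name: str) -> None:
        """Update the state for one finger sign."""
        if name in IMMEDIATE_SIGNS:
            self.controller = IMMEDIATE_SIGNS[name]
            self.active = True
        elif name == "little_finger":   # announce control of the effect unit
            self.controller = "effect"
            self.active = False
        elif name == "index_finger":    # activate level changes
            self.active = self.controller is not None
        elif name == "fist":            # closing the hand deactivates
            self.active = False
        else:
            raise ValueError(f"unknown sign: {name}")

    def arm_height(self, height: float) -> bool:
        """Apply an arm height from 0.0 (low) to 1.0 (high); True if used."""
        if not self.active or self.controller is None:
            return False
        self.values[self.controller] = min(max(height, 0.0), 1.0)
        return True
```

In this reading, arm movement before the index-finger sign is ignored, and after the fist sign the last level is kept until a controller is activated again.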

Experience and Results

The notation used for Etude for Percussion and ConDiS was quite successful; for conducting, everything was clear and simple to follow. The performer did not complain but felt that the recorded audio images should either be placed below the notes or not be used at all. He found that they made the notes difficult to read and preferred regular volume expressions such as p and f. The sign language worked fine, although I started to realize the limits on how much information a conductor can convey. It took time to get used to the sign language and to remember the various functions. I also felt the graphics were too busy. They worked, but this was a piece for a solo instrument; what if there were more instruments?

Etude for Flute and ConDiS

The third and last piece in the sequence of experimental pieces for the ConDiS system was an exercise for flute and ConDiS. There are fewer changes in the score graphics, owing to the experience and practice gained from the previous experiments.

Sonic Notation

As can be seen, slight changes have been made to the sonic notation. The icons are unchanged, but the way they are used is now closer to the use of hairpins in classical notation, and classical volume expression signs (p, mp, f) are now introduced.

For me, using % to indicate sonic changes was not satisfactory since it did not look or feel very musical. Why not use the same language for these indicators as we use in traditional music notation? Therefore, I decided to indicate the volume of the effects with musical signs such as p, mf, f, etc., which can easily be transcribed to %: ppp could be less than 10% and fff between 90 and 100%. I still use the number one (1) icon to indicate the first button, which is located on the index finger of the conducting glove. This was later changed to the button numbering used in traditional piano fingering, with the thumb as the first finger and the index finger as number two. Hence a different numbering appears for the first time in Kuuki no Sukima.
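The transcription from dynamic signs to percentages could be sketched as a simple lookup table. Only the two outer ranges (ppp below 10% and fff between 90 and 100%) come from the text; every range in between, and the names `DYNAMIC_RANGES` and `dynamic_to_percent`, are assumptions chosen for illustration.

```python
# Percentage range for each dynamic sign. Only the ppp and fff ranges are
# stated in the text; the intermediate ranges are assumed for this sketch.
DYNAMIC_RANGES = {
    "ppp": (0.0, 10.0),
    "pp": (10.0, 25.0),
    "p": (25.0, 40.0),
    "mp": (40.0, 50.0),
    "mf": (50.0, 60.0),
    "f": (60.0, 75.0),
    "ff": (75.0, 90.0),
    "fff": (90.0, 100.0),
}


def dynamic_to_percent(sign):
    """Return the midpoint of the percentage range for a dynamic sign."""
    try:
        low, high = DYNAMIC_RANGES[sign]
    except KeyError:
        raise ValueError(f"unknown dynamic sign: {sign}") from None
    return (low + high) / 2.0
```

Using the midpoint is one simple choice; a performer or programmer could equally pick any value inside the range for a given passage.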

Extended Instrumental Notation

In this work, Etude for Flute and ConDiS, more emphasis was placed on extended notation than in previous works. I looked very closely at the extended notation of Luciano Berio[5] and Kaija Saariaho[6], as well as Robert Dick’s[7] book The Other Flute (Dick, 1975).

It was important to exercise my own language to get the feel for the blending of various flute sounds and electronics.

By making a virtual computer version of the piece, I was able to experiment with the mix of flute and electronics as well as practice conducting the score. Flutist Trine Knutsen gave me very important feedback about notation and clarity, as well as advice on the physical limitations of the instrument and its performer.

Video example #1: Etude for flute video simulation (mockup) with score follow animation.

Learning by Doing

Experimenting with various forms of electronic and extended notation through the “Etude” compositions gave me a good feel for my extended musical language. I felt that I had gained enough practice to move forward and start the pre-compositional work for Kuuki no Sukima, my main composition using the ConDiS system. Although I still had a few unanswered questions concerning extended string writing, it was too time-consuming to write more Etudes. These experiments had to be done during the pre-compositional process.

Thanks are due to double bassist Michael Francis Duch[8], whose advice and instructions on performing sounds unknown to me were an eye-opener. Through our meetings I was introduced to other composers, including double bassist Stefano Scodanibbio[9], whose extended notation for double bass had a deep and lasting impact on my choice of extended string notation, especially the notation used in “E/Statico con contrabasso solo”.

Artistic Research Lecture and Concert

Experiments and Practical Studies

Working with Conductor Arne Johansen

A series of practical studies, first based on my own conducting practice and then involving conductors Arne Johansen (Jonsvatnet Brass) and Halldis Rønning (Trondheim Sinfonietta), led to the conclusion that there are limitations on how much information the conductor can deliver to the performers (including the computer) during a performance.

The work with Arne Johansen involved him conducting a five-minute “conventional” composition with added real-time control of volume and panning. We met three times at my office, where I explained the functions of ConDiS. Then we would practice with a computer simulation for about an hour each time. The first objective was for him to learn the four basic signs for volume and pan control. There was initial confusion, however, when it came to the controlling signs. Arne tended to give an OK sign (which is used to activate volume control) by using his thumb and middle finger, while I use my thumb and index finger. When shifting between volume control and pan control, i.e., between the OK and thumbs-up signs, there was sometimes confusion such that the computer would not deactivate one while shifting to the other, or it would activate both. That had not happened during my own trial conducting, and it ultimately had to do with the difference in the way we moved our fingers from one sign to another. It so happened that Arne preferred being able to control both volume and pan at the same time, and therefore a decision was made to allow him to do so. Arne was quick to learn the sign combination and add it to his conducting technique. He managed to practice individual control of two separate groups, which was done by using the index finger for group one and the combined index and middle fingers for group two.
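The two decisions described above, selecting a group by finger combination and allowing volume and pan to be active together, can be sketched as two small functions. The names `select_group` and `update_controls`, the sign labels, and the `allow_both` switch are hypothetical; the sketch only illustrates the logic and is not the ConDiS code.

```python
SIGN_TO_CONTROL = {"ok": "volume", "thumbs_up": "pan"}


def select_group(extended_fingers):
    """Return 1 for the index finger alone, 2 for index plus middle finger."""
    fingers = frozenset(extended_fingers)
    if fingers == {"index"}:
        return 1
    if fingers == {"index", "middle"}:
        return 2
    return None  # any other combination selects no group


def update_controls(active, sign, allow_both=True):
    """Return the set of active controls after a sign has been given.

    With allow_both=True a new sign adds its control to those already
    active; with allow_both=False it replaces them, so that only one
    control is active at a time.
    """
    control = SIGN_TO_CONTROL.get(sign)
    if control is None:
        return set(active)
    if allow_both:
        return set(active) | {control}
    return {control}
```

Making the choice an explicit switch, rather than a side effect of how the fingers move between signs, is one way to avoid the ambiguity that appeared in the rehearsals.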

Stage setup

Jonsvatnet Brass was placed center stage and divided into two groups using two overhead microphones, one for each group. One microphone faced left for group one, and the other faced right for group two. The conductor would then activate either microphone, that for group one (left side) or that for group two (right side), using the finger gesture with just one or two combined fingers. There was substantial leakage between the groups due to the close proximity of the microphones. The microphone input level had to be set very low to prevent unwanted feedback. There was subsequently not much room for electronic output.

Preparation and practice time with the Jonsvatnet Brass was limited, as is too often the case in the mixed media situation. Two rehearsals plus a concert was not enough time to perfect the quality of the sound and the mix of the acoustic and electronic sounds. Nor did this provide enough time to explore sufficiently the potential inherent in the ConDiS technology. Nevertheless, the concert performance was an essential milestone in the research process as it demonstrated the system’s constraints and potential.

Experience and Results

Although the concert went fine, meaning there was no technical breakdown, the artistic result was far from satisfactory. Having the brass band split into two groups and using one microphone for each group proved totally unacceptable:

- It was difficult to hear and differentiate the electronic sound of the two groups.

- The electronic sound level was too soft due to feedback problems probably caused by microphone and speaker placement.

- The electronic sounds were too complicated and too processed, and their appearance too random. It was therefore difficult to hear them and understand the relationship between the instruments and the electronics.

- Moving the sound around in space with the conductor making a circular motion while holding up his left arm sounded good but looked awkward and made the conductor look more like a cowboy waving a lasso than a conductor.

One valuable outcome from this study was undoubtedly the reassessment it prompted of the realistic use for ConDiS and of the classic role of the conductor. Another valuable lesson was the improved understanding of the importance of a clearer relationship between the sound source (instrument) and the electronic sound (digital sound process of the instrument). This lesson is most directly applied to the placement and use of microphones and loudspeakers.

Arne did well, activating the control of the two groups, adjusting the volume level, and moving the sound around. However, the ConDiS software made too many mistakes shifting between the volume, pan, and group 1 and 2. These mistakes did not harm the performance since I could correct them manually, but it became clear that revision was necessary. In this instance Arne was conducting a short, simple, straightforward composition that left him ample time to concentrate on conducting the electronics. Nevertheless, it turned out to be plenty of work for him.

The experiment with the Jonsvatnet Brass and conductor Arne Johansen was an invaluable experience in the development of ConDiS. It made it absolutely evident that future use would depend on simplifying the conductor’s control of the electronic parameters.

Experimental Work with Trumpeter Erik Kimestad

Collaboration with Erik Kimestad was conducted through Internet-based communication. He would send me audio files of various sounds he created with his trumpet. I then experimented with the sounds using various gestures and button clicks of the conducting glove, reporting back to him which sounds worked better than others. That way we could prepare for the concert to be held at the “Research Days” festival at the University of Agder in Kristiansand, Norway. Having a few hours to rehearse in person before the concert provided us with enough time to construct the performance. With Erik standing on stage and me in the audience facing him, we improvised a performance of around 30 minutes. Erik blew his horn while I sculpted the sound in real time with finger signs, arm gestures, and button clicking.

Figure 45. Facebook ad for the Research days in Kristiansand.

Experience and Results

It was a unique experience to be able to “grab” the sound from the trumpet, sculpt it, and move the sound in space using the “ConGlove” conducting glove. At the same time, through this experience, I became ever more convinced that having a conductor doing all those things for my composition Kuuki no Sukima (then in the making) was not what I wanted. Doing everything I was doing before and during this performance was a full-time occupation and there was not even a written score to worry about. Even though it was evidently possible to add these features for the conductor, it was visually disturbing. The effect would be too disturbing of the atmosphere I hoped to create with Kuuki no Sukima, and it would have ended up looking more like a circus act than an example of serious conducting.

Working with Conductor Halldis Rønning

Leaving out Pan and Effect Control

Work with conductor Halldis Rønning started in early October 2017. I was soon able to confirm my suspicion. My expectation that the conductor could “grab” the sound, throw it into the air, and make live sonic modulations was unrealistic. Although I had proven it to be technically possible (see a demo video of pan control and Fx control), it was not the job of the conductor. Therefore, after a Skype meeting with Halldis, the panning and effect control functions of the interface were disabled.

Figure 46. The conductor interface before October 2017 with pan and FX control.


Figure 47. The conductor interface after October 2017 without pan and Fx control.

In the subsequent weeks of our collaboration, Halldis was given more and more detailed versions of the score of Kuuki no Sukima, leading up to the point where we were ready to schedule a meeting in Trondheim on October 31st for an intensive workshop. That included reading through the composition with playback from a mock-up[10] version. This would allow Halldis to rehearse conducting the performance while following written instructions on when to start and stop, when to change tempo, and how to change the overall volume value when needed.

The workshop with Halldis that day involved more than rehearsing, conducting, and talking through the functions of the ConDiS system. It also meant walking through the notation and graphics used for electronic sound indication and extended instrumental technique. In Kuuki no Sukima there is substantially extended instrumental technique. Therefore, in the pre-compositional process, there was an urgent need to explore new lands of instrumental sonority and to experiment with the possibilities of various types of sonority. Hence the title Kuuki no Sukima – Between the Air.

To get a better grasp of the extended notation used in sonic experiments, three performers with the Trondheim Sinfonietta, flutist Trine Knutsen, double bassist Michael Francis Duch, and percussionist Espen Aalberg, were asked to be available that day to explore this issue through discussion and performance of some of the extended notation used in Kuuki no Sukima. The following videos provide examples from the workshop:

Sonic experimentation with flutist Trine Knutsen.

Video example #2: http://caveproduct.com/videos/Flute 2M_B62.mov

Sonic experimentation with double bassist Michael Francis Duch.

Video example #3: http://caveproduct.com/videos/DB_2M_B86.mov

Sonic experimentation with percussionist Espen Aalberg.

Video example #4: http://caveproduct.com/videos/KnoS_ext_perc.mp4

Much to our relief, the conducting rehearsals and sonic experiments went very well. Halldis felt quite comfortable using the conducting glove while conducting a mock-up simulation of Kuuki no Sukima. Unfortunately, there was not enough time to rehearse and experiment with the electronic sound extensions of those instruments, with the negative repercussion that there was too little rehearsal time with the electronic sounds before the first concert.

Experience and Results

In addition to confirming my suspicions about restrictions placed on conducting, our workshop revealed a few other things that needed to change. One was swapping the signs used for the activation and deactivation of the Volume Value Control. Originally the conductor opened her hand to activate and closed it (fist sign) to deactivate. This turned out to be uncomfortable (unnatural) for Halldis, and the signs had to be reversed.

Figure 48. The on/off function reversed.

Another discovery that was a bit disappointing was that my use of altered hairpin signs to indicate effects was not very meaningful to her. She was not familiar with reading a musical score containing graphics for increased delay or decreased feedback.

Figure 49. Hairpins designed for various effects.

Halldis felt this information made the musical score more complicated to read. Instead, she recommended a simple way to indicate the overall loudness of the electronics, hence audio wave graphics were written into the musical score to indicate the overall audio level.

Figure 50. Graphical illustration of the overall audio level.

This was an unexpected discovery and led to the conclusion that two kinds of scores were needed.

Two Printed Versions of a Musical Score and a Book of Instructions

Conductor Halldis Rønning and I decided to print two versions of the score for Kuuki no Sukima. One contains all the electronic graphics and the other features none aside from the audio graphics indicating the overall volume. The score including all the electronic graphics is named “Composer-Conductor” and the other is the “Performance Score.” Instead of adding all instructions to the score as is traditional, i.e., instrumentation, notation, and instructions, a decision was made to present these as a separate file (book). The reason behind this decision was that the score itself is already quite a few pages in length and if everything else were included, it would be too long and bulky. It also helps to have the instructions in a separate book since it adds to the clarity of the work. In other words, there is aesthetic value in keeping the music and the technical/technological information separate.

This was a somewhat disappointing conclusion, since I really thought that I had come up with a simple solution to extend the classic notation vocabulary. It was my belief that using similar signs to express changes in electronic effect values would be the perfect solution. This still is my belief. One likely explanation for its not working in this particular context is the fact that the altered hairpin signs were too alien to Halldis and making them a part of her score reading practice would have required more time than we had.

Over the course of this period of research, I have developed a system for writing that I understand and can use to express my compositional needs. I have also created a means to extend my own compositional aim. That is, I have found a way to write the electronic effects with the same precision as the instrumental notes—a way to have them conducted and performed with the same expression.

Therefore, having to write two versions of the same score was a small price to pay and a solution that I am able to accept, at least for the time being.

Expanding Artistic Need

Musical Intention

Looking at the way in which Kuuki no Sukima is written, it appears at first glance to be traditional. It has standard staves for each instrument, ordered conventionally and carrying the relevant information about speed and volume. The notation is largely traditional, with exceptions where it uses extended notation. What, then, makes this score different from most other scores written for mixed music? It is how I have chosen to write electronics into the score.

The way I write has much to do with my method of composing mixed music: I like live, real-time sound processing of each instrument in the ensemble, and I like to process the instrumental sounds and the electronics with as much precision as possible.

It all feeds into my intention to find a way to compose and perform mixed music in a larger format while maintaining a level of precision that fulfills my musical aspirations.

I do not look at the electronics as separate instruments. I choose instead to look at them and treat them as extensions of typical instruments, i.e., extended instruments or hyper-instruments. Their sonic material is constructed on top of their instrumental sound; for instance, the electronic sound source associated with the flute is the sound of the flute processed in real time. A sound that starts as a voiced flute sound and then gradually changes its sonority would be written as follows:

Figure 51. Kuuki no Sukima 1st movement. m.50-54.

Figure 51 shows the flute playing a harmonic overtone while simultaneously singing the tone C. At the beginning, there is a pure flute and voice sound that gradually changes sonority; its sonority is extended as the electronic effects fade in (out).

To be able to hear these electronically processed sounds in my head the same way I hear the written pitches of the instruments, I need to write them down parallel to the instrument notes. I therefore write all sonic changes right below the respective instrument. That way I can see, just by looking at the musical score, how the sound progresses through time with respect to both the given instrument and the whole instrumental group. This need for connectedness explains why I do not write these changes on a separate electronic line, as is often done in mixed music.

It may be somewhat confusing that I express my need to treat the electronic sounds as if they were individual instruments while at the same time talking about the electronics as a sonic extension of the instrumental sound. I use this expression in relation to how I perceive the interplay between the instrumental and electronic sounds. I hear the electronic sound as an offspring of the instrument, closely related but, at the same time, able to maintain its independence with respect to time, space, and volume. That is why, when looking at the score, all electronic effects, their location in space (pan), and volume are written on each instrument staff.

My intention in the ConDiS project is to capture the interplay that happens in mixed music composition and performance. The interplay between the written instrumental instructions and their associated electronic sounds. The interplay between the written score and the performer. The interplay between the performer and conductor, and even the interplay between the performers and audience.

Testing Sonic Relation

Instrumental and Electronic Sound

During the composition process, interesting complications arose when testing out the sonic relation between the acoustic instruments and the electronics.

Firstly, there was the unfortunate reality of working in the medium of mixed music, where there is often very limited time available to work on the electronics with the performers. Although I did meet with most of the players on an individual basis, there was little time to adjust the electronic sound effects. The reason? Too much time had to be spent experimenting with the extended instrumental techniques: their physical, notational, and sonic aspects. This meant testing out how this or that concept sounded in practice. These experiments and adjustments are very time-consuming and left no time for additional experiments with the electronics. Therefore, all of the experiments involving the electronic extension of the instrumental sounds were done with mock-ups. This method is far from perfect, especially because most sample libraries include very few extended techniques, which typically leads to relatively poor results.

Secondly, conducting mixed sonic experiments and tests requires studio time and studio preparation. In my case, it meant moving personal gear to the studio, including loudspeakers, to set up a quadraphonic audio system. This type of mixed sonic studio trial was done with the percussionist performing Etude for Percussion and ConDiS, but it turned out not to be worth the time spent. There was feedback trouble, and watching the computer while wearing the conducting glove to be able to conduct the sound proved complicated. Cueing up the score and the DAW was particularly troublesome, especially since I had not yet developed the use of buttons to jump back and forth. Therefore, I decided to do most of the sonic experiments and tests using computer simulation or mock-up at my office desk.

Video example #5: Extended violin test.

http://caveproduct.com/videos/Vln_verttrem.mp4

[1]An indirect reference to the American composer Morton Feldman, who liked to compare his selection and treatment of notes to the care of children.

[2]Aural Sonology (auralsonology.com) is an approach to the analysis of sonic and structural aspects of music-as-heard, developed at the Norwegian Academy of Music.

[3]Synth, sampler and effects processor developed by Øyvind Brandtsegg at the Norwegian University of Science and Technology (NTNU).

[4]It should be noted that these indications date from the time when this had to be done manually on hardware. Kaija Saariaho has said that, when Max/MSP patches are used for performance, the indications should be disregarded, as they are irrelevant and in some cases contradict what is happening.

[5]Luciano Berio (1925-2003) Italian composer known for his compositional experimentations.

[6]Kaija Saariaho (1952) Finnish composer known for her mixed music compositions.

[7]Robert Dick (1950) American flutist, composer, improvisor, and inventor, known for his writings on extended flute technique.

[8]Michael Francis Duch (1978) an experimental composer and improvisor.

[9]Stefano Scodanibbio (1956-2012) Italian double bassist and composer known for his use and notation of extended double bass technique.

[10]Mock-up is a term normally used for a MIDI simulation of a performance played via computer.